Reducing Event Sourcing Complexity to Boost Product Velocity

I won’t go into great detail about why event sourcing is hard. In short, it is a significant paradigm shift from the way typical CRUD applications are built, and it adds substantial technical complexity. For startups in particular, adopting this architecture is costly because it slows down how fast developers can ship.

Achieving benefits without slowing down

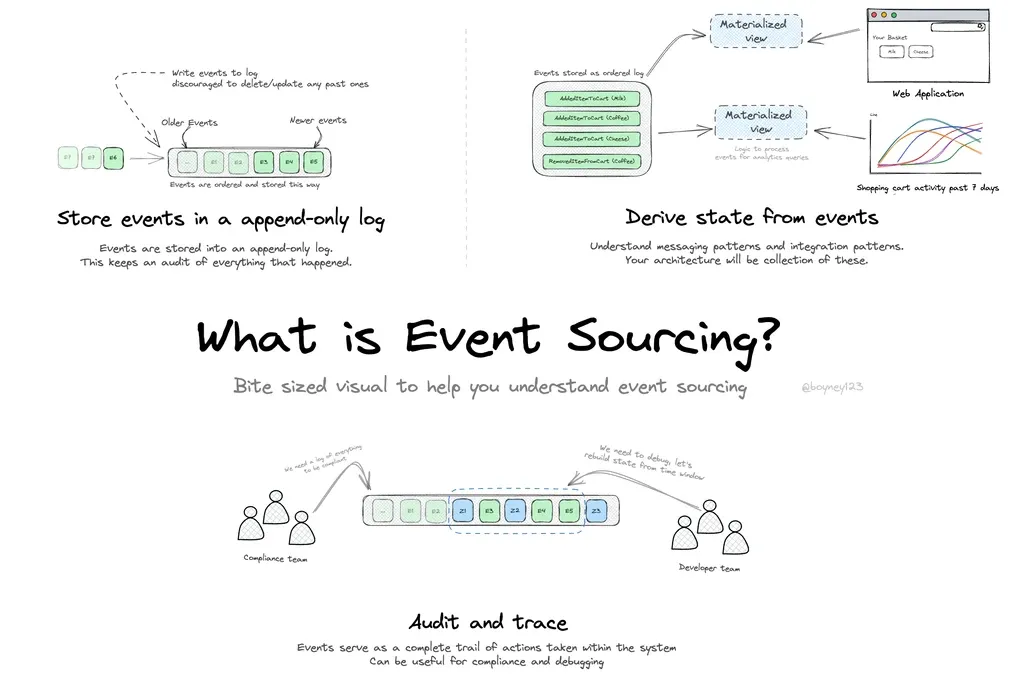

At the core of event sourcing is the event log: a record of immutable facts documenting every change to an application's state. Why does this matter? Because sometimes knowing only the current state of the app isn't enough; we want to know how it got there.
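To make the idea concrete, here is a minimal, illustrative sketch of an append-only event log and of deriving state by replaying it. The shopping-cart event types (`ItemAdded`, `ItemRemoved`) and helper names are hypothetical, chosen only for this example; this is not how Bemi stores events.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)  # frozen: an event's fields cannot be reassigned once created
class Event:
    type: str
    data: dict[str, Any]


# Append-only log: events are only ever added, never modified or deleted.
event_log: list[Event] = []


def append(event: Event) -> None:
    event_log.append(event)


def apply(state: dict[str, Any], event: Event) -> dict[str, Any]:
    """Return a new state with one (hypothetical) event applied."""
    if event.type == "ItemAdded":
        return {**state, "items": state["items"] + [event.data["item"]]}
    if event.type == "ItemRemoved":
        return {**state, "items": [i for i in state["items"] if i != event.data["item"]]}
    return state  # unknown event types leave the state unchanged


def replay(events: list[Event]) -> dict[str, Any]:
    """Rebuild state from scratch by folding every event in order."""
    state: dict[str, Any] = {"items": []}
    for event in events:
        state = apply(state, event)
    return state


append(Event("ItemAdded", {"item": "book"}))
append(Event("ItemAdded", {"item": "pen"}))
append(Event("ItemRemoved", {"item": "book"}))

print(replay(event_log))      # current state: {'items': ['pen']}
print(replay(event_log[:2]))  # state before the removal: {'items': ['book', 'pen']}
```

The current state alone would only tell us the cart holds a pen; the log also tells us a book was added and later removed, and replaying a prefix of the log reconstructs any earlier state.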

A pragmatic way to get the advantages of event sourcing is to record an event log after data changes have already taken place. You build your application the way developers are used to, with a bit of extra functionality added around write operations. This gives you the best of both worlds: an append-only log of state changes without sacrificing product velocity! This functionality can be added within the application or at the database level.

Application-level tracking

Writing application code to track data changes is the simplest approach, but it comes with drawbacks. Popular libraries such as paper_trail (Ruby on Rails) and django-simple-history (Django) use model callbacks to perform additional inserts during write operations. Besides adding runtime overhead to every write, this approach compromises reliability: changes made outside the application stack, such as manual SQL queries or other services writing to the same database, aren't captured.
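The sketch below mimics the callback pattern in a simplified form: a hypothetical `save_user` helper updates a row and then fires an `after_save` hook that inserts a version record. It uses Python's built-in `sqlite3` module as a stand-in database; the table and function names are illustrative and are not the paper_trail or django-simple-history API. The last write shows the reliability gap: an update issued directly through SQL bypasses the hook and leaves no version behind.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT);
    CREATE TABLE versions (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        table_name TEXT, record_id INTEGER, changes TEXT
    );
""")


def after_save(record_id: int, before: dict, after: dict) -> None:
    """Hypothetical callback: store changed fields as [old, new] pairs (one extra insert per write)."""
    changes = {k: [before.get(k), v] for k, v in after.items() if before.get(k) != v}
    conn.execute(
        "INSERT INTO versions (table_name, record_id, changes) VALUES (?, ?, ?)",
        ("users", record_id, json.dumps(changes)),
    )


def save_user(record_id: int, email: str) -> None:
    """Application write path: write the row, fire the callback, commit both together."""
    row = conn.execute("SELECT email FROM users WHERE id = ?", (record_id,)).fetchone()
    before = {"email": row[0]} if row else {}
    if row:
        conn.execute("UPDATE users SET email = ? WHERE id = ?", (email, record_id))
    else:
        conn.execute("INSERT INTO users (id, email) VALUES (?, ?)", (record_id, email))
    after_save(record_id, before, {"email": email})
    conn.commit()


save_user(1, "a@example.com")
save_user(1, "b@example.com")

# A write outside the app's save path (e.g. a manual SQL fix) skips the callback.
conn.execute("UPDATE users SET email = 'c@example.com' WHERE id = 1")
conn.commit()

print(conn.execute("SELECT COUNT(*) FROM versions").fetchone()[0])  # 2, not 3
```

The history now claims the latest email is `b@example.com`, while the row actually holds `c@example.com`: the version table is only as complete as the code paths that remember to call the hook.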

Database-level tracking

Tracking data history at the database layer is the most reliable option, because every change is captured regardless of which client made it. In PostgreSQL, this can be done with PGAudit, Audit Triggers, or a pattern called Change Data Capture (CDC).

PGAudit: Sends detailed audit logs to the standard PostgreSQL log output, but doesn't record events in a table.

Audit Triggers: Records changes in an audit log table, but runs synchronously inside each write transaction, which adds load to the primary database instance and slows down writes.

CDC: Recommended for scalability; asynchronously captures data changes by reading PostgreSQL's Write-Ahead Log (WAL), typically through logical decoding.
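Of these three options, audit triggers are the easiest to see in action. The sketch below uses SQLite (through Python's built-in `sqlite3` module) as a self-contained stand-in; a PostgreSQL audit trigger would be written with a PL/pgSQL trigger function instead, but the mechanism is the same: the database itself appends to an audit table within the same transaction as each write, so even ad hoc SQL is captured. The table and trigger names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT);

    -- Append-only audit table, populated by the database rather than the app.
    CREATE TABLE audit_log (
        id INTEGER PRIMARY KEY AUTOINCREMENT,
        operation TEXT, record_id INTEGER, old_email TEXT, new_email TEXT
    );

    -- Row-level triggers run synchronously as part of each write statement.
    CREATE TRIGGER users_insert_audit AFTER INSERT ON users BEGIN
        INSERT INTO audit_log (operation, record_id, old_email, new_email)
        VALUES ('INSERT', NEW.id, NULL, NEW.email);
    END;
    CREATE TRIGGER users_update_audit AFTER UPDATE ON users BEGIN
        INSERT INTO audit_log (operation, record_id, old_email, new_email)
        VALUES ('UPDATE', NEW.id, OLD.email, NEW.email);
    END;
    CREATE TRIGGER users_delete_audit AFTER DELETE ON users BEGIN
        INSERT INTO audit_log (operation, record_id, old_email, new_email)
        VALUES ('DELETE', OLD.id, OLD.email, NULL);
    END;
""")

# Plain SQL from any client is audited; no application hooks are involved.
conn.execute("INSERT INTO users (id, email) VALUES (1, 'a@example.com')")
conn.execute("UPDATE users SET email = 'b@example.com' WHERE id = 1")
conn.execute("DELETE FROM users WHERE id = 1")
conn.commit()

for row in conn.execute(
    "SELECT operation, record_id, old_email, new_email FROM audit_log ORDER BY id"
):
    print(row)
# ('INSERT', 1, None, 'a@example.com')
# ('UPDATE', 1, 'a@example.com', 'b@example.com')
# ('DELETE', 1, 'b@example.com', None)
```

The cost described for Audit Triggers is visible here too: every user write now performs a second insert in the same transaction on the same database, which is the overhead CDC avoids by reading the WAL asynchronously.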

Although CDC is generally the preferred option, it still has drawbacks: it's the most complex of the three to implement, and it lacks the application context behind a change (where, who, how). You can check out Bemi's architecture docs to see how we overcame these challenges.

Subscribe to stay posted about the next blog post, where I'll explain our architecture in greater detail!